Mark T. Godfrey

roughly reverse-chronological portfolio

curriculum vitae (pdf)

Shred Video

Shred Video uses artificial intelligence to do the hard work of video editing so you don't have to. Just choose your videos/photos and a song from your library, and Shred Video will return a professional-quality final cut in seconds! Designed for adventure travelers and action sports athletes. I'm the co-founder and CTO.

2015-present

AutoRap

Turn speech into rap and correct bad rapping. AutoRap maps the syllables of your speech to any beat, creating a unique rap every time. It also has a karaoke mode in which your rap's rhythm is adjusted to better fit the original flow, akin to pitch correction/Auto-Tune. I was responsible for the low-level audio algorithms and development, as well as top-level app UI development. I was also in charge of editing, annotating, and formatting the popular musical content that's pushed to the app regularly. It peaked at #3 overall in the App Store, with over 20M downloads.

2012-2014

ZOOZbeat

ZOOZbeat is a mobile music-making app for iPhone/iPod Touch and Nokia phones running Symbian that allows anyone to easily compose and remix music, even with no musical experience. It has been downloaded over million times. I was one of two developers who built a custom C sequencer and sampler optimized for fixed-point processors, and I was deeply involved in all interface and usability design. A dynamic website was also developed in Ruby on Rails for uploading, rendering, storing, and sharing user-created songs.

2008-2010

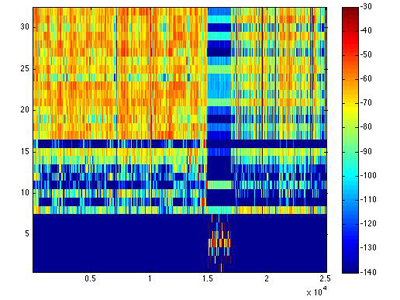

Hubs and Homogeneity

My graduate research, culminating in a master's thesis, involved experiments in improving content-based music modeling. My goal was to improve the state-of-the-art "bag of frames" methods as well as examine and address the phenomenon of "hubs": songs that appear similar to a large number of other songs. I showed that a process of parametric model homogenization can aid in correcting the models of "anti-hubs", thus improving similarity measures for all models. I also looked into non-parametric modeling techniques (e.g. kernel density estimation) to address these issues.

2007-2008

Indian Classical Music Recommendation

Under Dr. Parag Chordia, I worked on augmenting the standard timbral feature-based music models with melodic information, an approach especially well-suited to North Indian classical music. The ultimate goal was to create a recommendation engine that would offer a user not only songs with similar instrumentation and recording quality, but also songs with similar melodic information, which has been shown to be closely tied to mood and emotion in this music. A dataset, nicm08, was compiled consisting of 897 tracks and annotated with tags for performer, instrumentation, raag, and recording quality.

2007-2008

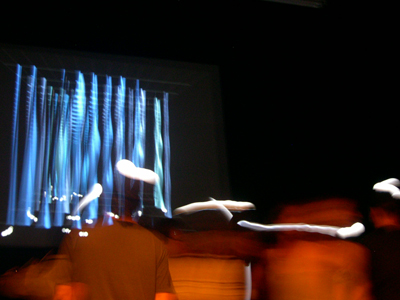

Flock

Flock is a full-evening performance piece composed by Dr. Jason Freeman for saxophone quartet, live electronics, and audience participation, in which music notation, electronic sound, and video animation are all generated in real time based on the locations of musicians, dancers, and audience members as they move and interact with each other. I was responsible for developing a particle-filter-based computer vision tracking system for locating each unique musician and every audience member. It was performed at the Adrienne Arsht Center for the Performing Arts in Miami, FL in Dec. 2007 and at the 01SJ Biennial in San Jose, CA in June 2008.

2006-2008

Covey

Inspired by the Aeolian harp, an instrument "played" by the wind, Covey is an interactive sound installation based on the rich timbre and fluid gestural control of the harp. Computer vision technology tracks participants' locations and movements, translating them into "wind energy" to play a synthetic harp. In the same way that a force of nature excites the man-made Aeolian harp, humans are the force driving the virtual, technological instrument in Covey. I was responsible for the sound design and the computer vision software (see Flock above). It premiered at the Georgia Tech Listening Machines concert, Eyedrum Gallery, Atlanta, 2007 and was performed in Miami and San Jose with Flock. It was selected for inclusion at the Spark Festival of Electronic Music and Arts, Minneapolis, 2008.

2006-2008

Flou

Flou is an interactive web application in which users explore 3-D worlds containing musical loop and effects objects. By collecting the loops and effects, users dynamically create their own unique temporal and spatial mix. Users are also encouraged to create their own worlds and collaborate with other users through the web. It was commissioned by New Radio and Performing Arts, Inc. for Networked Music Review. Flou was also adapted for live performance and performed at the Programmable Media II: Networked Music symposium, New York, 2008 and the Georgia Tech Listening Machines concert, Atlanta, 2008.

2007-2008

Haile

Haile is a robotic percussionist developed by Dr. Gil Weinberg that can listen to live players, analyze their music in real time, and use the product of this analysis to play back in an improvisational manner. It is designed to combine the benefits of computational power and algorithmic music with the richness, visual interactivity, and expression of acoustic playing. I adapted Haile to play xylophone and worked on two pieces: Svobod for saxophonist, piano, and robot, performed at Georgia Tech with Frank Gratkowski and at ICMC07 in Copenhagen, and iltur for Haile for jazz quartet and robot, performed at the Georgia Tech Listening Machines concert, Atlanta, 2007.

2007

Accessible Aquarium

Conducted under Dr. Bruce Walker, this project aims to make dynamic exhibits such as those at museums, science centers, zoos, and aquaria more engaging and accessible for visitors with vision impairments by providing real-time interpretations of the exhibits using innovative tracking, music, narrations, and adaptive sonification.

2006-2007

Nomen Novum

A local experimental pop music act. Live, I played electronics and keyboards, sang sometimes, and did audio-reactive video projections on occasion. Not live, I helped make new sounds and manipulate the world's sounds.

2008-2010